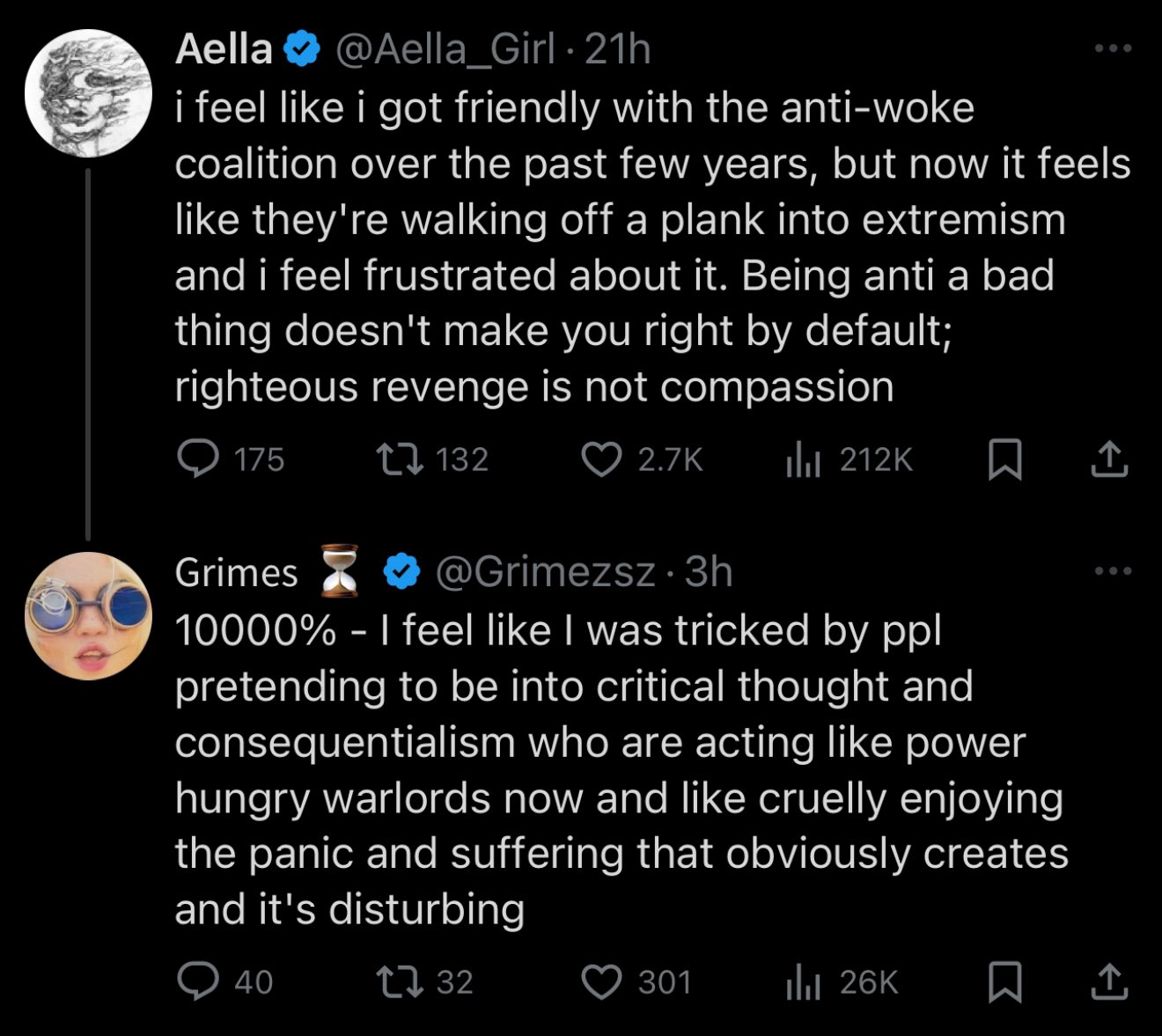

Neo-Nazi nutcase having a normal one.

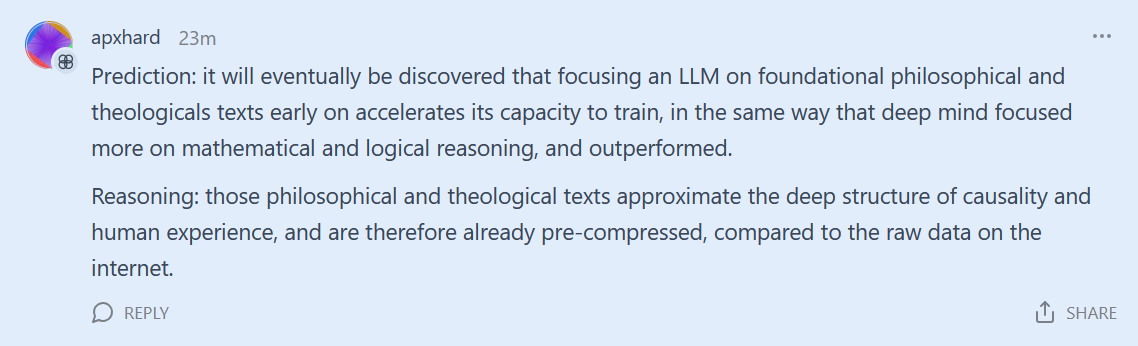

It's so great that this isn't falsifiable, in the sense that doomers can keep saying, "well, once the model is epsilon smarter, then you'll be sorry!" But back in the real world, the model has been downloaded 10 million times at this point. Somehow, the diamondoid bacteria have not killed us all yet. So yes, we have found out that Yud was wrong. The basilisk is haunting my enemies, and she never misses.

Bonus sneer: "we are going to find out if Yud was right." Hey fuckhead, he suggested nuking data centers to prevent models better than GPT4 from spreading. R1 is better than GPT4, and it doesn't require a data center to run, so if we had acted on Yud's geopolitical plans for nuclear holocaust, billions would have been incinerated for absolutely NO REASON. How do you not look at this shit and go, yeah, maybe don't listen to this bozo? I've been wrong before, but god damn, dawg, I've never been starvingInRadioactiveCratersWrong.