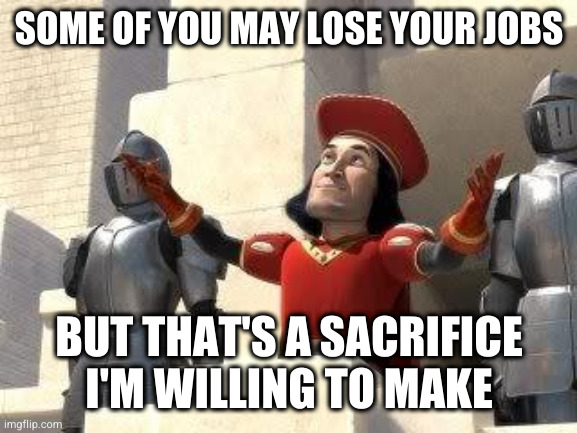

I mean if we're getting rid of jobs that don't contribute high quality, let's start replacing CEOs with AI.

She's the CTO, you're just agreeing with every person who has held that role since the beginning of time. I guarantee that's her first wish to the genie. Like, seconds after rubbing the lamp. Wouldn't even let it get to the "crick in the neck" part.

It's viable for the actual work of being a CEO, but then it becomes a "who gets to prompt it" issue.

We need a chief executive promoter

No one gets to prompt it… let it run continuously generating output/orders from the big data about company and external environment instead of prompts. The prompts are the weekly state of affairs at the company. There is one initial prompt to set it up and running. Let’s see this company crumble

That already sounds like better work than most c-suite d-bags manage

CEO isn't a job, it's a position. And yes, the CEO's office is already the one where AI use is generally starting from in corpo environments.

"It is difficult to get a man to understand something, when his salary depends on his not understanding it."

Upton Sinclair

I like how AI has all this knowledge out of nowhere without requiring input.

Great thing AI.

In fairness, that is not how knowledge works, for anyone or anything. You don't know things without input. You had an education, you receive sensory input and are able to formulate conclusions off past experiences and information. This particular argument is simply a bad faith attempt at a jab. There are much better arguments against AI.

"that is not how knowledge works, for anyone or anything. You don’t know things without input"

That's not the argument though. AIs don't "learn" in the traditional sense. Their work is purely derivative. There is no logic or creative mind. They take something that exists, simplify it into algorithms and then spit out something similar.

Tomorrow, a brand new style of whatever could become popular. Without being fed the direct reference, that AI would not be able to recreate it, depending on its complexity.

If you take away the source, AI will only work in the confines of its knowledge base. If the only other inputs AI sees are AI outputs, entropy is inevitable.

In the same light, I think what people will eventually find is AI will net creative jobs. Which is comical. To generate enough source material for the AI to "learn" something, we will end up creating more than we would have by just creating it in the first place. And use twice the resources to do it.

Edit:

For example, ask AI to make an image in the style of Into the Spider-Verse.

Now attempt to get similar results without directly asking it to mimic Into the Spider-Verse.

Second, using AI for creative work is by definition a downgrade. It has certain capabilities but there is no comparison to actual intelligence. Good luck to the schmuck capitalists that attempt to use it as a replacement rather than a tool.

I feel as though you misunderstood. I was not defending her or AI replacing workers. I am staunchly against that and actively fight against it in my daily life. I was simply refuting the ontological basis of your argument. There are more errors in your rebuttal, but I will leave them alone.

That's a lot of words to say, "no you."

Good thing you referenced metaphysics, though, otherwise people might not get the point that you are better than me and that's the whole foundation of your argument!

Hardly. AIs like LLMs and GANs very much reflect their training set. This is just a fact about how they work.

If you go down that line you'll reach a point where you'll also start arguing that art/literature should also be restricted for (human) educational use. And I would rather die than ever support publishers having that power.

AI could be a great boon. AI could give us space to do less of what we don't want to do. However, AI will belong to the rich. The masses will be forced to do ever more degrading service jobs like we see springing up now.

I hope I am wrong.

There's a pretty big free open source community around AI and many of the best models are completely free.

It's a bit ironic because I'm guessing big companies like OpenAI are the ones pushing the AI is theft issue, since open source wouldn't be able to afford the price asked by data aggregators like Reddit, Getty, Adobe, etc. Sadly, getting paid was never in the cards for individual artists; most of the data is owned by specific websites.

In the same vein, OpenAI was lobbying for Congress to enact laws because AI could be "dangerous". It's quite clear they wanted to essentially outlaw any competition from small organisations by piling on even more costs.

So yes, they might end up owning all of AI and by extension the economy, but we'd have to let ourselves be manipulated into giving it to them. Sadly a lot of people only deal in emotive knee-jerk reactions so it might work.

Especially people here and on the Reddit equivalent of this community.

Nobody's out here defending gigacorpos or grifters like Musk etc., and yet luddites always want to turn this consequence of capitalism into some culture war, because all they can really think of to do about any of this is bully the nerds, the queers and the neurodivergent for knowing more about technology than they do.

Fines for the poor are fees for the rich. When breaking laws becomes an investment, the laws become a hurdle to prevent competition.

"should" by what metric? We have creative work because we want to, not because we must

So, to be fair, the line after the quote refers specifically to those that produce "low quality" output.

So a charitable but not unreasonable read might be that she's saying any creative role that's easily replaced with AI isn't really a loss. In some cases, when we're talking about artists just trying to make a living, this is really some vile shit. But in the case of email monkeys in corporations and shitty designers and marketeers, maybe she's got a point along the same lines as "bullshit jobs" logic.

On the other hand, the tech industry’s overriding presumption that disruption by tech is a natural good and that they're correctly placed as the actuators of that "good" really needs a lot more mainstream push back. It's why she felt comfortable declaring some people in industries she likely knows nothing about "shouldn't exist" and why there weren't snickers, laughter and immediate rebukes, especially given the lack (from the snippet I saw) of any concern for what the fuck happens when some shitty tech takes away people's livelihoods.

If big tech's track record were laid out, in terms of efficiency, cost, quality etc, in relation to the totality of the economy, not just the profits of its CEOs ... I'm just not sure any of the hype cloud around it would look reasonable anymore. With that out of the way, then something so arrogant as this can be more easily ridiculed for the fart-sniffing hype that it so easily can be.

But in the case of email monkeys in corporations and shitty designers and marketeers, maybe she’s got a point along the same lines as “bullshit jobs” logic.

I get your overall point and don't disagree.

The thing is about that specific bit - no job is a bullshit job when it's what you rely on to pay your bills. Even if you don't like the job, even if you aren't the best at it, if it's keeping a roof over your head, having it arbitrarily erased by some technology that didn't exist 10 years ago is a pretty shitty thing. And even if that's somehow an inescapable reality of progress (and I think there is a lot of discussion that could be had about that concept) it's still shitty for her to portray that as no big deal. I don't think context makes the comment much less shitty than the headline implies.

In addition to the fact that as has been pointed out repeatedly, AI learned how to do what it does from the output of the jobs it will now destroy, and without compensation of any kind to the people who created that output.

OK, so one caveat and one outright disagreement there.

The caveat is that she herself points out that nobody knows whether the jobs created will outnumber the jobs destroyed, or perhaps just be even and result in higher quality jobs. She points out there is no rigorous research on this, and she's not wrong. There's mostly either panic or giddy, greedy excitement.

The disagreement is that no, AI won't destroy jobs it's learning from. Absolutely no way. It's nowhere near good enough for that. Weirdly, Murati is way more realistic about this than the average critic, who seems to mostly have bought into the hype from the average techbro almost completely.

Murati's point is you can only replace jobs that are entirely repetitive. You can perhaps retopologize a mesh, code a loop, marginally improve on the current customer service bots.

The moment there is a decision to be made, an aesthetic choice or a bit of nuance you need a human. We have no proof that you will not need a human or that AI will get better and fill that blank. Technology doesn't scale linearly.

Now, I concede that only applies if you want the quality of the product to stay consistent. We've all seen places where they don't give a crap about that, so listicle peddlers now have one guy proofreading reams of AI generated garbage. And we've all noticed how bad that output is. And you're not wrong in that the poor guy churning those out before AI did need that paycheck and will need a new job. But if anything that's a good argument for consuming media that is... you know, good? From that perspective I almost see the "that job shouldn't have existed" point, honestly.

The caveat is that she herself points out that nobody knows whether the jobs created will outnumber the jobs destroyed, or perhaps just be even and result in higher quality jobs. She points out there is no rigorous research on this, and she’s not wrong. There’s mostly either panic or giddy, greedy excitement.

Even if we take as settled the concept that more jobs will exist in aggregate, I'm doubtful that there's a likely path for most of the first wave (at least) of people whose jobs are destroyed into one of those jobs "created" by AI. I have nothing to back this up but my gut, however in this case I feel pretty good about that assertion. My point is that their personal tragedy at losing their job is in most cases not going to be alleviated by the new jobs created by this advancement.

We have no proof that you will not need a human or that AI will get better and fill that blank. Technology doesn’t scale linearly.

I've seen recent AI porn images and I saw what Deepdream was doing a few years ago. I don't see a reason to think we can't expect it to get better based on that. 🙂 I also acknowledge that these may be apples and oranges even more than I suspect they are.

As someone who works in IT (though as I'm sure you can tell I have no expertise whatsoever in machine learning), I still tend to strongly agree with this statement from @maegul@lemmy.ml :

On the other hand, the tech industry’s overriding presumption that disruption by tech is a natural good and that they’re correctly placed as the actuators of that “good” really needs a lot more mainstream push back.

Every industrial transition generates that, though. Forget the Industrial Revolution, these people love to be compared to that. Think of the first transition to data-driven businesses or the gig economy. Yeah, there's a chunk of people caught in the middle that struggle to shift to the new model in time. That's why you need strong safety nets to help people transition to new industries or at least to give them a dignified retirement out of the workforce. That's neither here nor there, if it's not AI it'll be the next thing.

About the linear increase path, that reasoning is the same old Moore's law trap. Every line going up keeps going up if you keep drawing it with the same slope forever. In nature and economics lines going up tend to flatten again at some point. The uncertainty is whether this line flattens out at "passable chatbots you can't really trust" or it goes to the next step after that. Given what is out there about the pace of improvement and so on, I'd say we're probably close to progress becoming incremental, but I don't think anybody knows for sure yet.

And to be perfectly clear, this is not the same as saying that all tech disruption is good. Honestly, I don't think tech disruption has any morality of any kind. Tech is tech. It defines a framework for enterprise, labor and economics. Every framework needs regulation and support to make it work acceptably because every framework has inequalities and misbehaviors. You can't regulate data capitalism the way you did commodities capitalism and that needed a different framework than agrarian societies and so on. Genies don't get put back in bottles, you just learn to regulate and manage the world they leave behind when they come out. And, if you catch it soon enough, maybe you get to it in time to ask for one wish that isn't just some rich guy's wet dream.

Think of the first transition to data-driven businesses or the gig economy.

Just a clarification: the "gig economy" was not "new" in any way, just using new technology to skirt around labor laws and find loopholes in regulations in order to claw back profits that had been "lost" to things like pensions and health coverage.

Well, yeah, that's what I'm talking about here, specifically. There was an application of technology that bypassed regulations put in place to manage a previous iteration of that technology and there was a period of lawlessness that then needed new regulation. The solutions were different in different places. Some banned the practice, some equated it with employees, some with contractors, some made custom legislation.

But ultimately the new framework needed regulation just like the old framework did. The fiction that the old version was inherently more protected is an illusion created by the fact that we were born after common sense guardrails were built for that version of things.

AI is the same. It changes some things, we're gonna need new tools to deal with the things it changes. Not because it's worse, but because it's the same thing in a new wrapper.

Thank you for clarifying. Definitely agree on this. Especially with regards to the perceived guardrails.

That’s why you need strong safety nets to help people transition to new industries or at least to give them a dignified retirement out of the workforce. That’s neither here nor there, if it’s not AI it’ll be the next thing.

I agree with most of what you wrote in this paragraph, but we have no such strong safety nets. I don't think the fact that it has happened previously is justification for creating those circumstances again now (or in the future) without concern for how it impacts people. We're supposed to be getting better as time goes by. (not that we are by many other metrics I can see on a daily basis, but as you say that's another conversation)

Genies don’t get put back in bottles, you just learn to regulate and manage the world they leave behind when they come out. And, if you catch it soon enough, maybe you get to it in time to ask for one wish that isn’t just some rich guy’s wet dream.

I also agree with this.

But, I find there is plenty of justification to push back and try to slow the proliferation of AI in certain areas while our laws and morality try to catch up.

Your "we" and my "we" are probably not the same, I'm afraid. I'm not shocked that the difference in context would result in a difference of perception, but I'd argue that you guys would need an overhaul on the regulations and safety nets thing regardless.

Fair point, this ol' nation needs a new set of spark plugs and a valve job, at a minimum. :)

Edit: DAMMIT how am I a moderator again @VerbFlow@lemmy.world? Removing myself again now.

I’m with you on that.

Thanks. I hate deliberately out of context quotes. Watching the entire interview is actually very interesting. Lots to agree and disagree with here without having to... you know, make things up.

On the jobs situation she later mentions that "the weight of how many jobs are created, how many jobs are changed, how many jobs are destroyed, I don't know. I don't think anybody knows(...), because it's not been rigorously studied, and I really think it should be". That also comes after a comment about how jobs that are "entirely repetitive" (she repeats that multiple times) may be removed, but she clarifies that she means jobs where the human element "isn't advancing anything", which I think puts the creative jobs quote in context as well. I like how the interviewer immediately goes to "maybe we can cut QA" and you can see in her face that she goes "yeah, no, I'm gonna need those" before going for a compromise answer.

I don't agree with the perspective she puts forward about how the tools are used, I think she's being disingenuous about the long term impact and especially about the regulations and what they do to their competitors. But latching onto this out of context is missing the point.

That all makes sense. Thanks! I was tempted to watch the whole interview but realised I didn't actually care that much. I figured something like what you describe was where she was going just from the clip I watched, and of course it was obvious that rage baiting was going on here.

And exactly what you said ... many listening to the full interview will probably think she, and therefore AI generally, is actually "right" and will be valuable ... except for all the shitty ways it's gonna be used by corporations and all of the shitty and presumptive things OpenAI and others will do to win their new platform war.

I think there's plenty of rightful criticism of the things she actually says, and plenty of things she says I wouldn't take at face value because they're effectively token corporate actions to dismiss genuine concerns.

She actually gets asked in the Q&A about the IP rights of creators included in training data, and she talks about some ideas to calculate contributions from people and compensate for them, but it's all clearly not a priority and not a full solution. I'm not gonna get into my personal proposals for any of that, but I certainly don't think they're thinking about it the right way.

Also, if you REALLY want a chilling thing she says, go find the part where she says they may eventually allow people to customize the moral and political views of their chatbots on top of a standard framework, and she specifically mentions allowing churches to do that. That may be the most actually dystopian concept I've heard come out of this corner of the techbrosphere so far, even with all the caveats about locking down a common baseline of values she mentions.

Also, if you REALLY want a chilling thing she says, go find the part where she says they may eventually allow people to customize the moral and political views of their chatbots on top of a standard framework, and she specifically mentions allowing churches to do that.

eeeesh.

Who is this bitch, and how did she get into such a position of power?

If they did not exist you would not be able to train AI on them and therefore not have the AI in the first place. How does that compute?
