Ph.Deez nutz.

I have friends who actually have a Ph.D. It takes many years to get one, plus a real attempt to actually better a field. People tend to trust your opinion on a subject when you have a doctorate in that field.

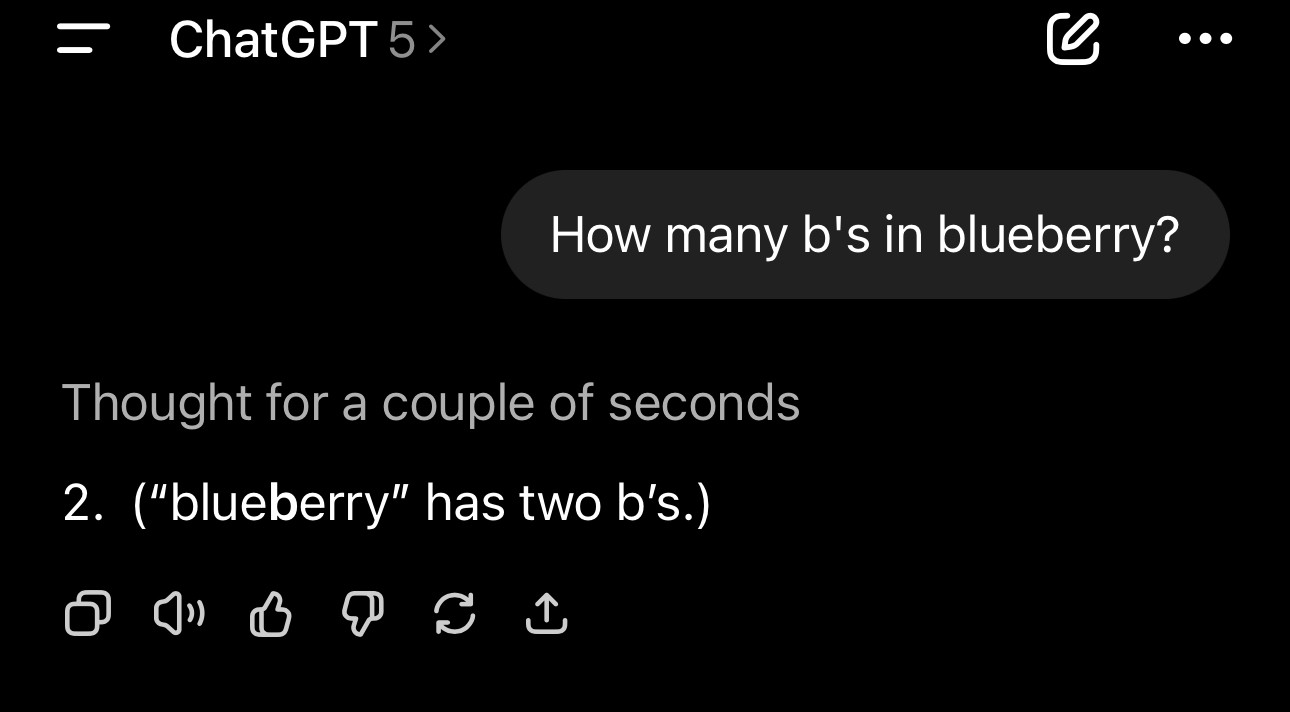

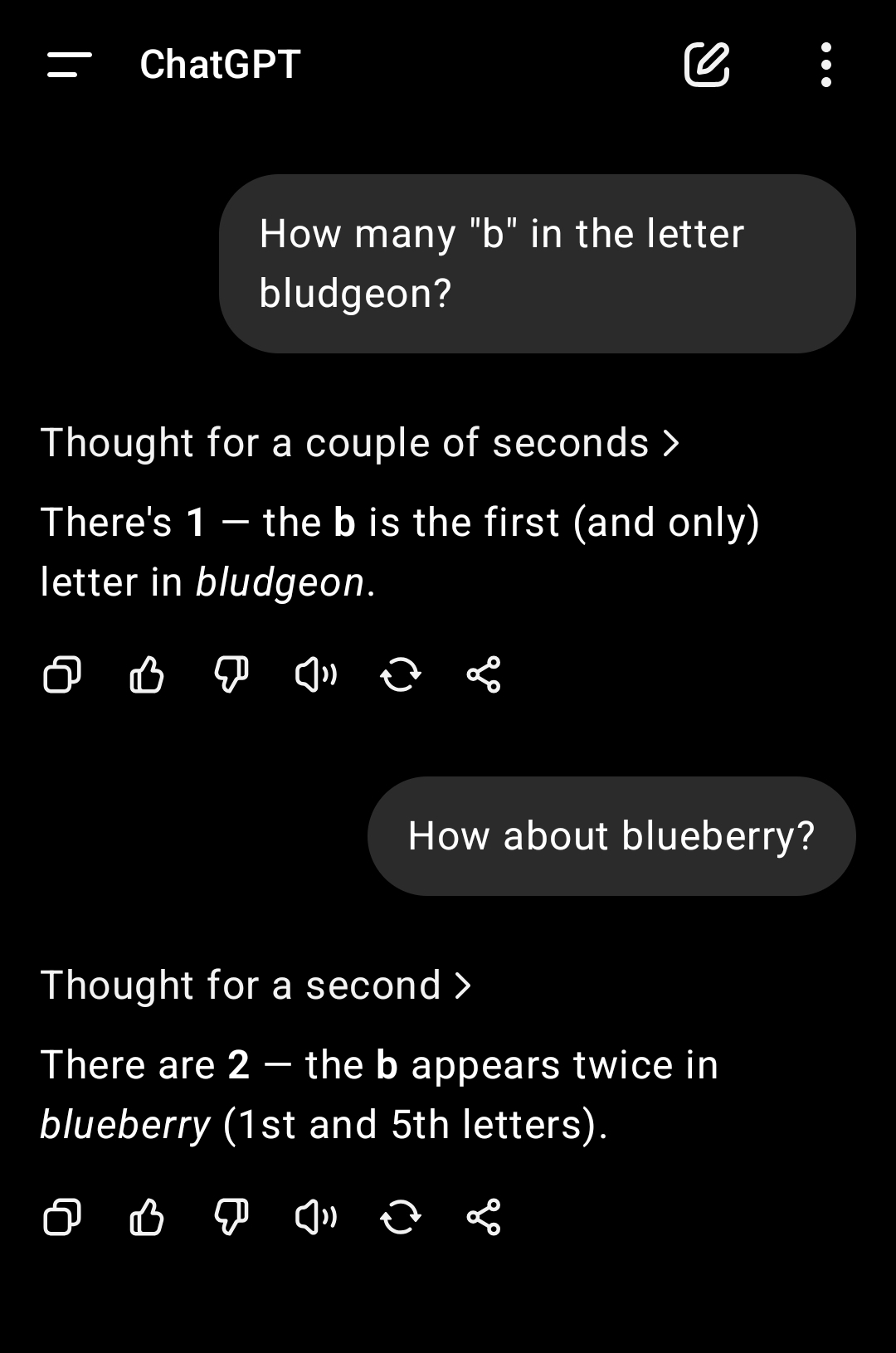

I can't even trust ChatGPT to answer a basic question without fucking up and apologizing to me, only to fuck up again.

Maybe stop treating language models like AGI? They're awesome at recognizing semantic similarities between words and phrases (embeddings) as well as generating arbitrary but reasonable-looking output that matches an expected format (structured outputs). That's cool enough. Stop pretending it isn't, and stop falsely advertising it as being able to cure cancer and world hunger, especially when you wouldn't even be happy if it did.