Am I out of touch?

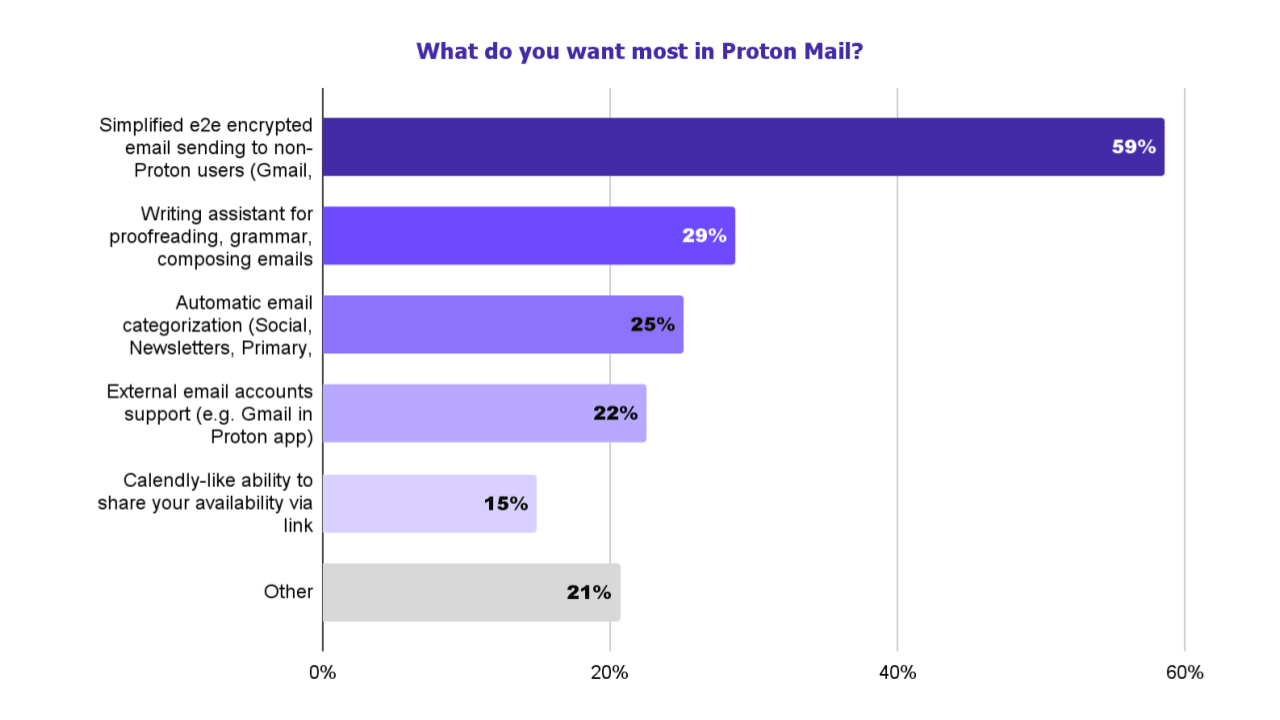

a writing assistant was one of the most requested features in our recent survey

Apparently, I am. People actually want this

For Proton Mail, 59% of respondents want an easier way to send end-to-end encrypted emails to non-Proton users, while 29% want a writing assistant for proofreading, grammar, and composing emails.

Nothing I hate more than not giving a link to the repo

Scribe relies on open source code and models, and is itself open source and therefore available for independent security and privacy audits

It's not on their support page for Scribe either

Had to go to Reddit and look at their comments to find out they're using Mistral

https://reddit.com/comments/1e68sof/comment/ldsbs24

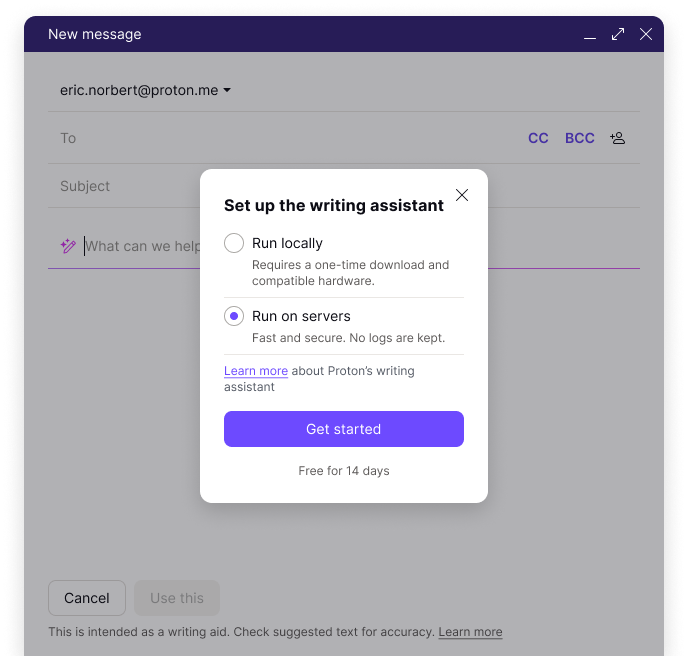

We built Scribe in r/ProtonMail using the open-source model Mistral AI to empower anyone in need of email productivity to use a privacy-respecting alternative to r/ChatGPT or r/GeminiAI that:

❌ doesn't log or save prompts

⛔️ doesn't use your data for training

🔎 open-source code that anyone can inspect

🖥️ can be run locally, so your data never leaves your device

See the official announcement here: https://proton.me/blog/proton-scribe-writing-assistant

https://huggingface.co/mistralai/Mistral-7B-v0.1/discussions/8

Hello, thanks for your interest and kind words! Unfortunately we're unable to share details about the training and the datasets (extracted from the open Web) due to the highly competitive nature of the field. We appreciate your understanding!