In a robotics lab where I once worked, they used to have a large industrial robot arm with a binocular vision platform mounted on it. It used the two cameras to track an object's position in three-dimensional space and stay a set distance from it.

It worked the way our eyes work: the cameras panned and tilted quickly to follow small movements, while the platform's pan and tilt and the arm's position adjusted to follow larger ones.

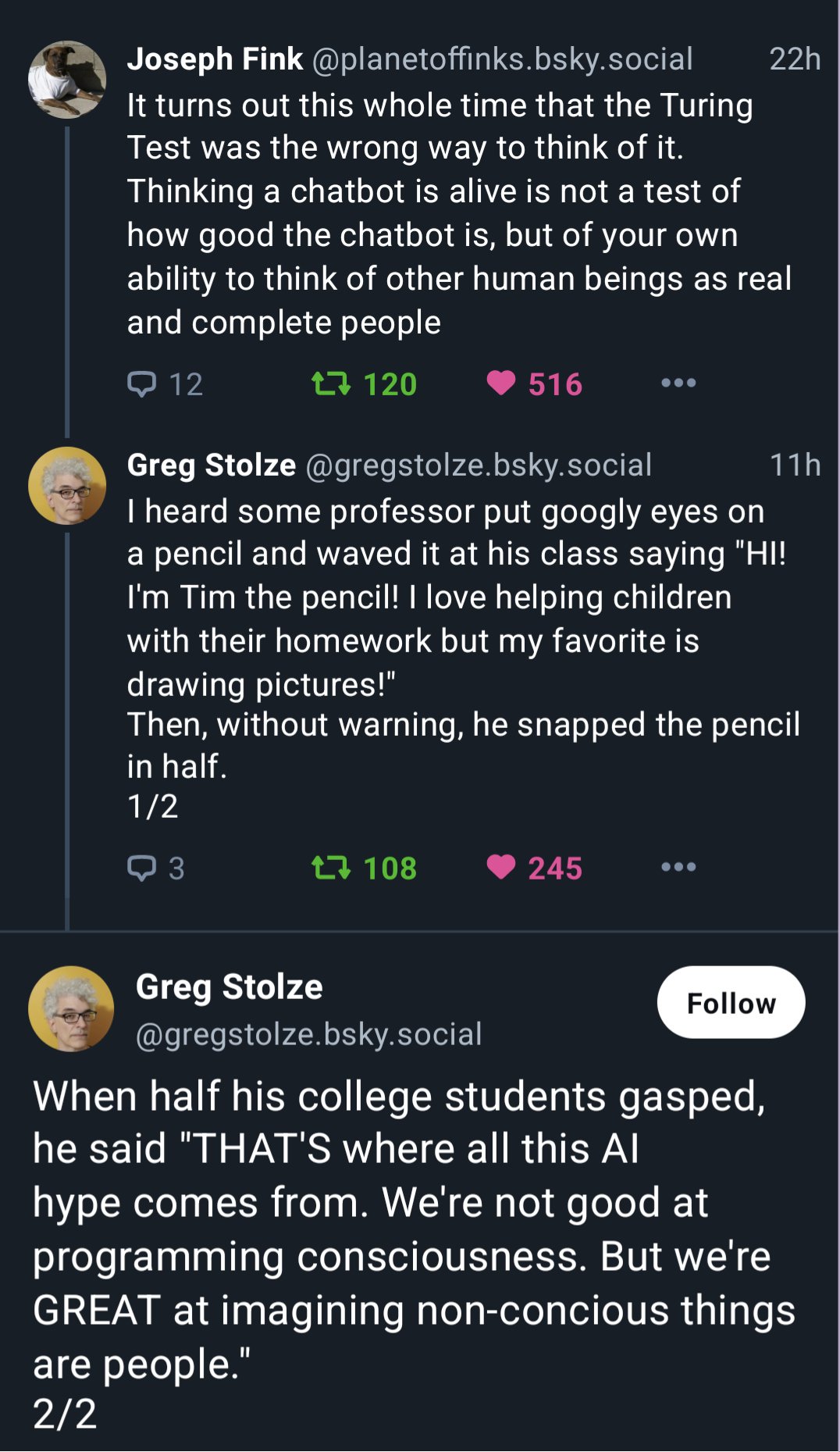
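To make the fast/slow split concrete, here is a minimal sketch of one control step along a single axis (pan or tilt). It is not the lab's actual controller, which I never saw the code for; the function name `step_gaze`, the camera range `eye_limit`, and the gain `platform_gain` are all made-up values for illustration, and the arm's distance-keeping is left out entirely.

```python
def step_gaze(target, platform, eye_limit=10.0, platform_gain=0.2):
    """One control step along a single axis, all angles in degrees.

    target:   direction to the object in a fixed reference frame
    platform: current platform angle in the same frame
    Returns (new_platform, eye), where eye is the camera angle
    measured relative to the platform.

    eye_limit and platform_gain are hypothetical values, not taken
    from the real robot.
    """
    # Slow stage: the platform closes only a fraction of the error per step.
    platform = platform + platform_gain * (target - platform)
    # Fast stage: the cameras cover whatever error remains right away,
    # but only within their limited range of travel.
    error = target - platform
    eye = max(-eye_limit, min(eye_limit, error))
    return platform, eye


if __name__ == "__main__":
    platform = 0.0
    for _ in range(5):
        platform, eye = step_gaze(40.0, platform)
        print(f"platform={platform:.2f} eye={eye:.2f} gaze={platform + eye:.2f}")
```

For a small offset the cameras land on the target in a single step while the platform barely moves; for a large one the cameras saturate at their limit and the platform gradually catches up, letting the cameras drift back toward center. That is roughly the eye-then-head pattern the robot showed.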

People watching the robot would get an eerie, false sense that it was conscious, because the cameras moved the way we see people's eyes move.

Someone also put a necktie on the robot, which didn't hurt the illusion.