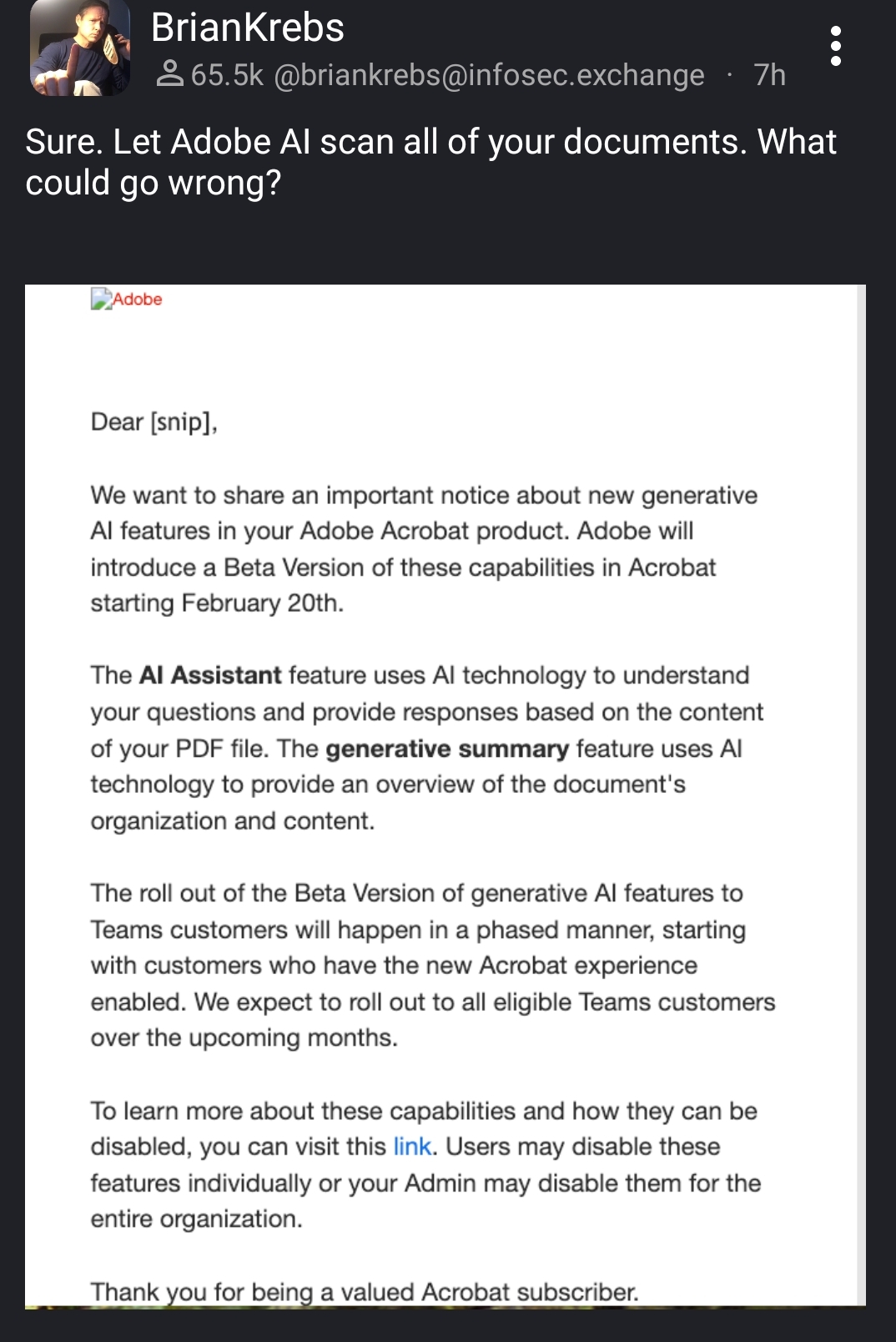

Oof... At work we deal with clients whose projects are covered by NDAs and confidentiality agreements, among other things. This is bad enough if the information scanned is siloed per organization, as it could create a situation where somebody not under NDA could access confidential client info leaked by an LLM that ingested every PDF in Adobe's cloud service without regard to distribution. Even worse if they're feeding everything back into a single global LLM -- corporate espionage becomes as simple as a bit of prompt engineering!

I highly doubt that they would be able to use private user data for training. Using data available on the internet is a bit legally grey, but using data that is not publicly available would surely be illegal. When the document is "read" by the LLM, that is no longer training, so it won't store the data or be able to regurgitate it.*

* that is, if they have designed this in an ethical and legal way 🙃

They will use every scrap of data you haven’t explicitly told them not to use, and they will make it so that the method to disable these ‘features’ is little known, difficult to understand/access, and automatically re-enabled every release cycle. When they are sued, they will point to announcements like this and the one or two paragraphs in their huge EULA to discourage, dismiss, and slow down lawsuits.

All the ones I've seen that are aimed at companies have explicit terms that protect your data and don't allow it to be shared anywhere.

But that’s just like, a suggestion, man.

And it’s kind of predicated on their admins being highly proactive about data protection, because the vendors certainly aren’t.

> * that is, if they have designed this in an ethical and legal way 🙃

This is Adobe we're talking about...

A corporation that charges a monthly subscription for products it could sell outright, offers them to students at a time when they are most likely to develop habits in them, and uses a proprietary storage format that only works well with its own products.

Once you get a customer addicted, you've got them for life.

Does the AI include a feature that converts the bloated, non-functional hulk of an application that is Adobe Acrobat into a usable, fit-for-purpose PDF viewer/writer/editor with a consistent interface? Ooh, I really hope it does, that would be really helpful.

Check out SumatraPDF. When I started a job with a ton of random PDF paperwork to fill out, I needed to find something to use. It’s awesome. And free.

If you just need to fill out forms, you can just use Firefox (and probably Chrome).

I need to do drawing markups. Bluebeam does a good job, but my current company refuses to get it and insists that Acrobat Pro is functional. I feel like that's something that someone who never has to use Acrobat Pro has decided.

Wow, that's stupid. I just looked it up and it costs a few hundred per year, which is probably way less than you waste using a bad tool. If I were your manager, I'd get it for you.

zathura or evince ftw

"To learn more about the capabilities, and whether or not we'll allow you to disable them,..."

Paperless-ngx is an awesome way to self host your documents.

EULAs are intolerable. The entire concept is invalid because of blatant abuse like this.

Ya know, AI has really pushed the Cyber Crime field years into the future! Adobe made an excellent decision adding it to their suite of technology used by businesses around the world!

It's almost like they don't have enough money already.
