AMD's integrated GPUs have been getting really good lately. I'm impressed at what they are capable of with gaming handhelds and it only makes sense to put the same extra GPU power into desktop APUs. This hopefully will lead to true gaming laptops that don't require power hungry discrete GPUs and workarounds/render offloading for hybrid graphics. That said, to truly be a gaming laptop replacement I want to see a solid 60fps minimum at at least 1080p, but the fact that we're seeing numbers close to this is impressive nonetheless.

I was sold on AMD once I got my Steam Deck.

same here. or at least i finally recognized their potential. but it's not just the performance, it's the power efficiency too!

Everything I see about AMD makes me like them more than Intel or Nvidia (for CPU and GPU respectively). You can't even use an Nvidia card with Linux without running into serious issues.

I hope red and blue both find success in this segment. Ideally the strengthened APU share of the market exerts pressure on publishers to properly optimize their games instead of cynically offloading the compute cost onto players.

Hell yeah, I want EVERYONE to make dope ass shit. I’ve made machines with both sides, and I hate tribal…ness. My current machine is a 9900K that’s getting to be… five years old?! I’d make an AMD machine today if I needed a new machine. AMD/Intel rivalry is so good for us all. Intel slacked so hard after the 9000-series. I hope they come back.

Intel has slacked hard since the 2000-series. One shitty 4 core release after another, until AMD kicked things into gear with Ryzen.

And during that time you couldn't buy Intel due to security flaws (Meltdown, Spectre, ..).

Even now they are slacking, just look at the power consumption. The way they currently produce CPUs isn't sustainable (AMD pays way less per chip with the chiplet design and is far more flexible).

Common W for AMD

Only downside if integrated graphics becomes a thing is that you can’t upgrade if the next gen needs a different motherboard. Pretty easy to swap from a 2080 to a 3080.

Integrated graphics is already a thing. Intel iGPU has over 60% market share. This is really competing with Intel and low-end discrete GPUs. Nice to have the option!

Yeah, I know integrated graphics is a thing. And that’s been fine for running a web browser, watching videos, or whatever other low-demand graphical application was needed for office work. Now they’re holding it up against gaming, which typically places large demands on graphical processing power.

The only reason I brought up what I did is because it’s an if… if people start looking at CPU integrated graphics as an alternative to expensive GPUs it makes an upgrade path more costly vs a short term savings of avoiding a good GPU purchase.

Again, if one’s gaming consists of games that aren’t high demand like Fortnite, then upgrades and performance probably aren’t a concern for the user. One could still end up buying a GPU and adding it to the system for more power assuming that the PSU has enough power and case has room.

AMD has been pretty good about this though, AM4 lasted 2016-2022. Compare that to Intel changing the socket every 1-2 years, it seems.

Actually AMD is still releasing new AM4 CPUs now. The 5700X3D was just announced.

Oh, now that sounds like something I might like

I don't have the fastest RAM out there, so whenever I upgrade from my 1600, I want an X3D variant to help with that

That's true, but I'm excited about the future of laptops. Some of the specs are getting really impressive while keeping power draw low. I'm currently jealous of what Apple has accomplished with literal all-day battery life in a 14-inch laptop. I'm hopeful some of the AMD chips will get us there in other hardware.

Could you not just slot in a dedicated video card if you needed one, keeping the integrated as a backup?

And the shared RAM. Games like Star Trek Fleet Command will crash your computer by messing with that/memory leaks galore. Far less crashy with a dedicated GPU. How many other games interact poorly with integrated GPUs?

Oh, oh ok I thought one of the new Threadrippers is so powerful that the CPU can do all those graphics in Software.

It's gonna take decades to be able to render 1080p CP2077 at an acceptable frame rate with just software rendering.

It's all software, even the stuff on the graphics cards. Those are the rasterisers, shaders and so on. In fact the graphics cards are extremely good at running these simple (relatively) programs in an absolutely staggering number of threads at the same time, and this has been taken advantage of by both bitcoin mining and also neural net algorithms like GPT and Llama.

It's a shame you're being downvoted; you're not wrong. Fixed-function pipelines haven't been a thing for a long time, and shaders are software.

I still wouldn't expect a Threadripper to pull off software rendering a modern game like Cyberpunk, though. Graphics cards have a ton of dedicated hardware for things like texture decoding or ray tracing, and CPUs would need to waste even more cycles to do those in software.
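To put rough numbers on the software-rendering point, here is a back-of-the-envelope sketch. The core count and clock speed are made-up round figures for illustration, not the specs of any real Threadripper, and the calculation assumes perfect scaling across cores, which real workloads won't achieve.

```python
# Rough cycle budget for rendering entirely on the CPU.
# ASSUMPTION: 64 cores at 4 GHz are hypothetical round numbers, not real specs.

def pixels_per_second(width, height, fps):
    """Number of output pixels that must be produced each second."""
    return width * height * fps

def cycles_per_pixel(cores, clock_hz, width, height, fps):
    """Upper bound on CPU cycles available per output pixel,
    assuming every core is fully used with zero overhead."""
    return cores * clock_hz / pixels_per_second(width, height, fps)

PIXELS_1080P60 = pixels_per_second(1920, 1080, 60)
budget = cycles_per_pixel(cores=64, clock_hz=4_000_000_000,
                          width=1920, height=1080, fps=60)

print(f"1080p at 60 fps: {PIXELS_1080P60:,} pixels per second")
print(f"Best-case budget: about {budget:.0f} cycles per pixel")
```

Even in this best case, that per-pixel budget has to cover geometry, texture sampling, shading and every extra render pass, and it ignores memory bandwidth entirely, so treat it as an optimistic ceiling rather than a prediction.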

For people like me who game once a month, and mostly stupid little games, this is great news. I bet many people could use that. It would reduce demand for graphics cards and allow those who want them to buy them cheaper.

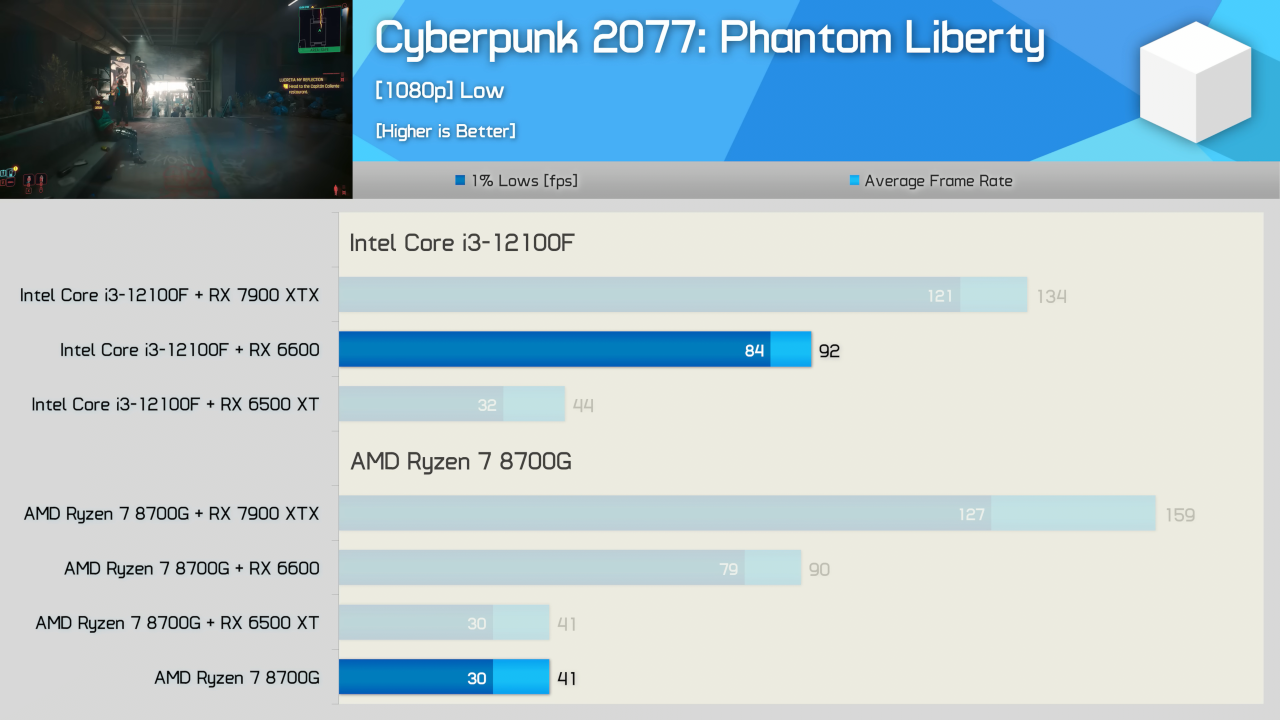

Mind you, it only gets these frame rates at low settings. While this is pretty damn impressive for an APU, it's still a very niche type of APU at this point and I don't see this getting all that much traction myself.

I think the opposite is true. Discrete graphics cards are on the way out, SoCs are the future. There are just too many disadvantages to having a discrete GPU and CPU each with its own RAM. We’ll see SoCs catch up and eventually overtake PCs with discrete components. Especially with the growth of AI applications.

A bit misleading, what is meant is that no dedicated GPU is being used. The integrated GPU in the APU is still a GPU. But yes, AMD's recent APUs are amazing for folks who don't want to spend too much to get a reasonable gaming setup.

Wow, it's almost like that's why they said you didn't need a graphics card, instead of saying you didn't need a GPU!

Because the title is still vague, and yes GPU and "graphics card" are often used interchangeably by the internet (examples: https://www.hp.com/gb-en/shop/tech-takes/integrated-vs-dedicated-graphics-cards and https://www.ubisoft.com/en-us/help/connectivity-and-performance/article/switching-to-your-pcs-dedicated-gpu/000081045 ).

"New CPU hits 132fps" could wrongly suggest software rendering, which is very different (see for example https://www.gamedeveloper.com/game-platforms/rad-launches-pixomatic----new-software-renderer ) and died more than a decade ago.

Up until the G in 8700G I totally thought 'software renderer' and was hella impressed. So yea, totally plausible it could have been described better.

Software rendering hasn’t worked in 99% of games made in the last 15+ years. Only the super under low fi hipster stuff would be fine without 3D acceleration.

Yeah slightly misleading but I guess they did mention a card specifically, not GPU.

But for a moment I was like wow, 100FPS in software rendering, that's impressive even for an EPYC.

> But for a moment I was like wow, 100FPS in software rendering

Thank you, that exactly was my point.

They say "graphics card" in the title and description, not "GPU", which implies a dedicated graphics solution rather than an integrated one.

I can see Single Board Computers with this on for powerful TV boxes. Hello, emulators and SteamOS‽

That's what the article says...

$US330 for the top 8700G APU with 12 RDNA 3 compute units (compare to 32 RDNA 3 CUs in the Radeon RX 7600). And it only draws 88W at peak load and can be passively cooled (or overclocked).

$US230 for the 8600G with 8 RDNA 3 CUs. Falls about 10-15% short of 8700G performance in games, but there's a much bigger spread in CPU performance (Tom's Hardware benchmarks), so I'm pretty meh on that one.

Given the higher costs for AM5 boards and DDR5 RAM, for about the same money as an 8700G build, or $100-200 more, you could combine a cheaper CPU with a better GPU and get way more bang for your buck. But I see the 8700G being a solid option for gamers on a budget, or parents wanting to build younger kids their first cheap-but-effective PC.

I also see this as a lazy man's solution to building small form factor mini-ITX Home Theatre PCs that run silent and don't need a separate GPU to receive 4K live streams. I'm exactly in this boat right now where I literally don't wanna fiddle with cramming a GPU into some tiny box, but also don't want some piece of crap iGPU in case I use the HTPC for some light gaming from time to time.
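For anyone who wants to play with the prices and CU counts quoted above, here is a quick sketch. Dollars per compute unit is a crude metric I'm introducing purely for illustration: it ignores clocks, the CPU cores and memory bandwidth, so it says nothing definitive about value.

```python
# Prices (USD) and RDNA 3 compute unit counts as quoted in this thread.
APUS = {
    "8700G": {"price_usd": 330, "compute_units": 12},
    "8600G": {"price_usd": 230, "compute_units": 8},
}
RX_7600_COMPUTE_UNITS = 32  # discrete card used as the comparison point above

def usd_per_cu(name):
    """Crude illustrative metric: list price divided by GPU compute units."""
    apu = APUS[name]
    return apu["price_usd"] / apu["compute_units"]

def cu_share_of_rx_7600(name):
    """Fraction of the RX 7600's compute unit count that the APU has."""
    return APUS[name]["compute_units"] / RX_7600_COMPUTE_UNITS

for name in APUS:
    print(f"{name}: ${usd_per_cu(name):.2f} per CU, "
          f"{cu_share_of_rx_7600(name):.1%} of the RX 7600's CU count")
```

By this measure the two chips cost nearly the same per CU, so the real difference between them is on the CPU side, as mentioned above.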

That's pretty damn impressive. AMD is changing the game!

Meh. It's also a $330 chip...

For that price you can get a 12th gen i3/RX 6600 combination which will obliterate this thing in gaming performance.

Your i3 has half the cores. Spending more on GPU and less on CPU gives better fps, news at 11.

So what's the point of this thing then?

If you just want 8 cores for productivity and basic graphics, you're better off getting a Ryzen 7 7700, which is not gimped by half the cache and less than half the PCIe bandwidth. And for gaming, even the shittiest discrete GPUs of the current generation will beat it if you pair them with a half-decent CPU.

This thing seems to straddle a weird position between gaming and productivity, where it can't do either really well. At that pricepoint, I struggle to see why anyone would want it.

It's like that old adage: there are no bad CPUs only bad prices.

It's about the same performance as a 1050 Ti, which is a 2016 GPU. It's still very much behind entry-level discrete GPUs like the RX 6600.

Might make sense for a laptop or mini PC, but I don't really see the point for desktop considering the price.

I think I might be the target market. I'm very happy with my 1070. I need a CPU and mobo upgrade imminently. I might just snag this and not think about a discrete GPU for a while.

The PlayStation 5 also does this.

So will this be an HTPC king? They kind of skimped on the temps in the article. I assume HWU goes over it and will watch it soon.
