Why the hell can't we just have both? One of the biggest problems with smart speakers and voice assistants is that they're so damn stupid so often. If A.I. became smart enough to be what the current assistants/speakers aren't, surely that would drive device sales and engagement astronomically higher, right?

That would be the goal. The tricky part is matching intents that align with some API integration to whatever psychobabble the LLM spits out.

In other words, the LLM is just predicting the next word, so how do you know when to take an action like turning on the lights, ordering a pizza, or setting a timer? The way that was done with Alexa needs to be adapted to fit the way LLMs work.

Microsoft seems to be attempting this with the new Copilot in Windows. You can ask it to open applications, etc., and also chat with it. But it is still pretty clunky when it comes to the assistant part (e.g. I asked it to open my power settings and after a bit of to and fro it managed to open the Settings app, after which I had to find the power settings for myself). And they're planning to charge for it, starting at an outrageous $30 per month. I just don't see that it's worth that to the average user.

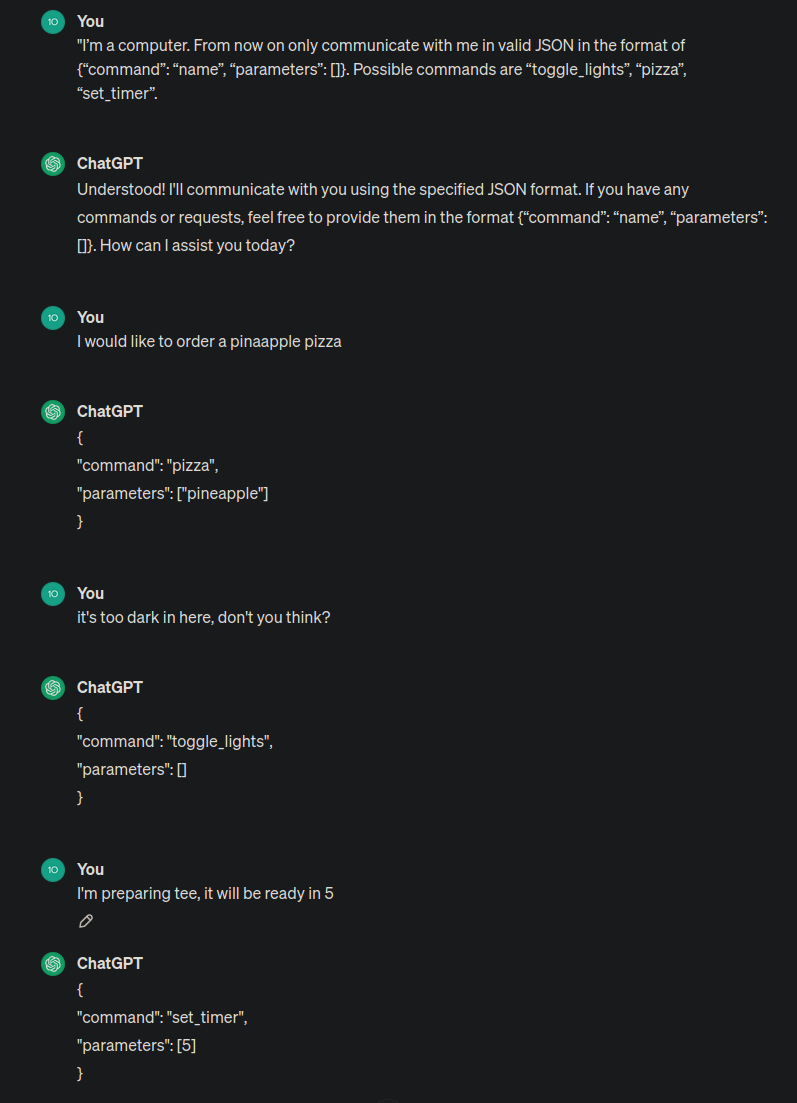

It's actually fairly easy. You start with a prompt like: "I'm a computer. From now on only communicate with me in valid JSON in the format of {"command": "name", "parameters": []}. Possible commands are "toggle_lights", "pizza", "set_timer"." And so on and so on. Current models are remarkably good at responding with valid JSON; I didn't have any issues with that. They will still hallucinate about details (what would it do if you asked it to set a timer for pizza?), but I'm sure you can train those models to address that. I was thinking about building an OpenAI/Google Assistant bridge myself for Spotify, for requests like "Play me that Michael Jackson song with that video clip with monsters". The current assistant can't handle that, but you can just ask ChatGPT for the name of the song and then pass it to the assistant. This is what they have to do, but on a bigger scale.
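For anyone who wants to see the receiving end of that prompt, here's a rough sketch of the glue code, not a real integration. It assumes the model reply arrives as a plain string in the `{"command": ..., "parameters": []}` format above. The handler functions, their parameters, and `dispatch` are all made up for illustration; a real bridge would call an actual smart-home or ordering API there. The point is that anything the model invents (unknown commands, bad parameters, chatty non-JSON text) gets rejected instead of executed.

```python
import json

# Hypothetical handlers: stand-ins for real smart-home / ordering API calls.
def toggle_lights(room="living room"):
    return f"toggled lights in {room}"

def order_pizza(topping="margherita"):
    return f"ordered a {topping} pizza"

def set_timer(minutes):
    return f"timer set for {int(minutes)} minutes"

# Allow-list of commands, matching the names given to the model in the prompt.
HANDLERS = {
    "toggle_lights": toggle_lights,
    "pizza": order_pizza,
    "set_timer": set_timer,
}

def dispatch(reply: str) -> str:
    """Parse one model reply and run the matching handler, or reject it."""
    try:
        message = json.loads(reply)
    except json.JSONDecodeError:
        return "rejected: not valid JSON"
    if not isinstance(message, dict):
        return "rejected: expected a JSON object"

    command = message.get("command")
    params = message.get("parameters", [])
    if not isinstance(command, str) or command not in HANDLERS:
        return f"rejected: unknown command {command!r}"
    if not isinstance(params, list):
        return "rejected: parameters must be a list"

    try:
        return HANDLERS[command](*params)
    except (TypeError, ValueError):
        # Wrong number or type of parameters, e.g. a timer "for pizza".
        return f"rejected: bad parameters for {command}"

if __name__ == "__main__":
    print(dispatch('{"command": "set_timer", "parameters": [10]}'))
    print(dispatch('{"command": "set_timer", "parameters": ["pizza"]}'))
    print(dispatch("Sure! I'll turn on the lights."))
```

The allow-list is the important bit: the model only ever picks a name, and the code decides whether that name maps to anything real.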

I just tried the new OpenAI voice conversation feature and thought about this too. It's everything I had hoped and dreamed that voice assistants would be when they first came out. It's really surprising that the ones from huge tech companies suck so much.

The tech to make them as good as what you just tried only came about more recently.

Voice assistants, particularly Siri, are structured in a VERY different way.

Because the elephant in the room is that AI isn't actually AI but is a huge database of internet and creative content combined with a language processing tool that takes its best guess at how to respond with that information to you.

AI today is just linear algorithms with bigger faster databases.

We can't have both because Alexa's job is not to give customers a good experience, it's to make them comfortable re-ordering Tide Pods with their voice.

Even households with Prime and an Echo in every room don't trust that bitch with their credit card. Making her smart won't fix that; she's a failure.

Damn voice assistants are going to be our memory of the 2010s like car phones were to the 80s.

This is the best summary I could come up with:

Amazon is going through yet another round of layoffs, reports Computerworld, and once again the company’s devices-and-services division appears to be bearing the brunt of it.

The layoffs will primarily affect the team working on Alexa, the Amazon voice assistant that drives the company's Echo smart speakers and other products.

"Several hundred roles are impacted," the company said in a statement, "a relatively small percentage of the total number of people in the Devices business who are building great experiences for our customers."

Amazon hasn't released an AI-powered version of Alexa yet, but it showed "an early preview" of its efforts in September, "based on a new large language model that's been custom-built and specifically optimized for voice interactions."

But the hardware is sold at cost, and people interact with Alexa mainly to play music or check the weather, not to spend money on Amazon or anywhere else.

It has been a tough year for Amazon's devices division, which has already borne a large share of the 27,000 layoffs that the company has announced in the last 12 months.

