Multiple TB when setting up a new server to mirror an existing one. (I did the initial copy with both machines in the same room, before moving the clone to a physically separate location. Otherwise that initial copy would have saturated the network connection for a week or more.)

Rsynced 4.2TB of data from one server to another, spread across multiple files.

~340GB, more than a million small files (~10KB or less each). It took about a week to move because the files were stored on a hard drive, which struggled to read that many files.

I've imaged an entire 128GB SSD to my NAS...

Around 15 TB migrating to a new NAS.

I did 100TB, 100 streams of 1TB, all simultaneous with rsync

I mean, dd claims it can handle a quettabyte, but how can we be sure?

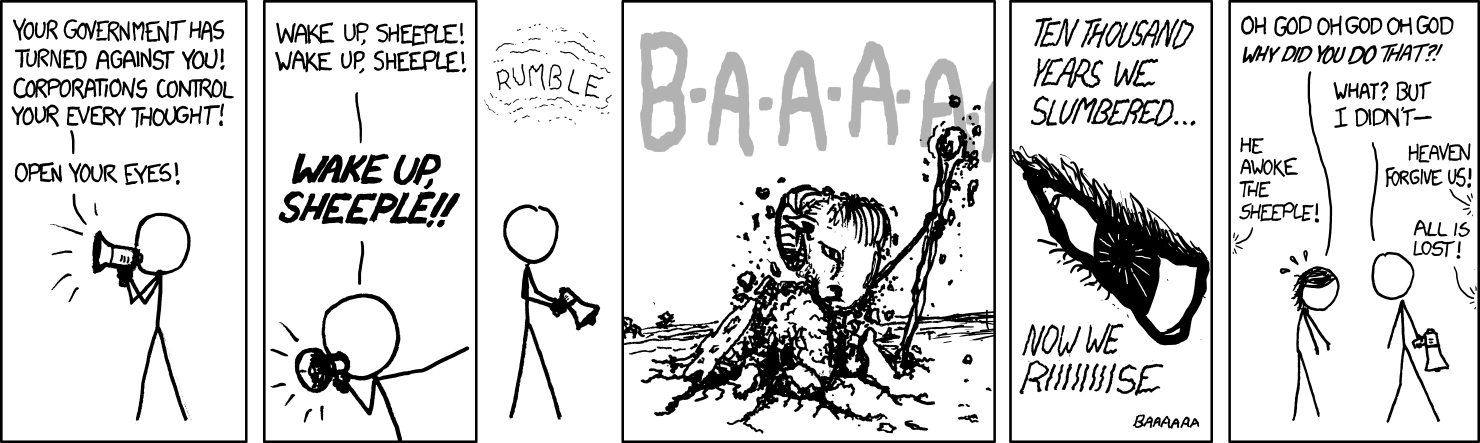

dd can’t really handle quettabytes! GNU has taken us all for fools! Alert the masses! Wake up sheeple!

You should ping CERN or Fermilab about this. Or maybe the Event Horizon Telescope team but I think they used sneakernet to image the M87 black hole.

Anyway, my answer is probably just a SQL backup like everyone else.

Today I migrated my data from my old ZFS pool to a new, bigger one. The rsync of 13.5TiB took roughly 18 hours. It's slow spinning-disk storage, so that's fine.

The second and third runs of the same rsync took like 5 seconds, blazing fast.
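For anyone wondering why the repeat runs are so fast: by default rsync skips files whose size and modification time already match on the destination, so an unchanged tree only costs a metadata walk. Here is a rough Python sketch of that idea. The names `needs_copy` and `sync_file` are made up for illustration, and this is not rsync's actual code, just the same quick-check logic under simple assumptions.

```python
import os
import shutil


def needs_copy(src: str, dst: str) -> bool:
    """Return True if dst is missing or differs from src in size or mtime."""
    if not os.path.exists(dst):
        return True
    s, d = os.stat(src), os.stat(dst)
    # Compare whole seconds only, since some filesystems drop sub-second precision.
    return s.st_size != d.st_size or int(s.st_mtime) != int(d.st_mtime)


def sync_file(src: str, dst: str) -> bool:
    """Copy src to dst only when the quick check says it changed."""
    if needs_copy(src, dst):
        shutil.copy2(src, dst)  # copy2 also copies the modification time
        return True
    return False
```

The first call to `sync_file` does the real copy; later calls only stat both files and return, which is why a second pass over 13.5TiB can finish in seconds.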

Local file transfer?

I cloned a 1TB+ system a couple of times.

As the Anaconda installer of Fedora Atomic is broken (yes, ironic) I have one system originally meant for tweaking as my "zygote" and just clone, resize, balance and rebase that for new systems.

Remote? 10GB MicroWin 11 LTSC IOT ISO, the least garbage that OS can get.

Also, some leaked stuff, 50GB over BitTorrent.

I once robocopied 16TB of media.

Do cloud platform storage operations count? If so, in the hundreds of terabytes (work)

Why would dd have a limit on the amount of data it can copy? AFAIK dd doesn't check anything, nor does it do anything fancy. If it can copy one bit, it can copy infinitely many.

Even if it did any sort of validation, if it can do anything larger than RAM it needs to be able to do it in chunks.
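To illustrate the chunking point, here is a minimal Python sketch of a block-by-block copy loop. It is not dd's source code; `copy_in_chunks` and its `block_size` parameter are hypothetical names picked for the example. The point is that memory use is bounded by one block, no matter how large the input is.

```python
import io


def copy_in_chunks(src, dst, block_size: int = 1024 * 1024) -> int:
    """Copy from src to dst one block at a time; return total bytes copied."""
    copied = 0
    while True:
        block = src.read(block_size)  # at most block_size bytes held in memory
        if not block:                 # empty read means end of input
            break
        dst.write(block)
        copied += len(block)
    return copied


# Usage with in-memory streams standing in for two disks (illustrative only).
source = io.BytesIO(b"a" * 2500)
target = io.BytesIO()
total = copy_in_chunks(source, target, block_size=1000)
```

Here `total` is the running byte counter, which is the only piece of state that grows with the size of the transfer.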

No, it can't copy infinite bits, because it has to store the current address somewhere. If they implement unbounded integers for this, they are still limited by your RAM, as that number can't infinitely grow without infinite memory.
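To put numbers on the counter argument, here is a small Python calculation. It assumes a signed 64-bit offset as the comparison point, which is a common file-offset size but not something confirmed here for any particular dd build. `bits_needed` is a hypothetical helper name.

```python
QUETTABYTE = 10**30        # SI quetta = 10^30 bytes
MAX_INT64 = 2**63 - 1      # largest value of a signed 64-bit integer


def bits_needed(n: int) -> int:
    """Number of bits required to store n as an unsigned integer."""
    return n.bit_length()


# A signed 64-bit offset tops out far below a quettabyte.
fits_in_int64 = QUETTABYTE <= MAX_INT64

# The counter itself stays tiny: its size grows with log2 of the byte count.
quetta_bits = bits_needed(QUETTABYTE)
```

So both comments have a point: a fixed-width counter does impose a hard limit, but an unbounded integer needs only about 100 bits to count a quettabyte, so RAM for the counter is not the practical constraint.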

80GB, it was 8 hours of (supposedly) 4k content in the MP4 format. https://www.youtube.com/watch?v=VF5JWdaJlvc Here's the link (hoping for the piped bot to appear).

Probably ~15TB through file-level syncing tools (rsync or similar; I forget exactly what I used), just copying my internal RAID array to an external HDD. I've done this a few times, either for backup purposes or to prepare to reformat my array. I originally used ZFS on the array, but converted it to something with built-in kernel support a while back because it got troublesome when switching distros. Might switch it to bcachefs at some point.

With dd specifically, maybe 1TB? I've used it to temporarily back up my boot drive on occasion, on the assumption that restoring my entire system that way would be simpler in case whatever I was planning blew up in my face. Fortunately never needed to restore it that way.

I recently copied ~1.6T from my old file server to my new one. I think that may be my largest non-work related transfer.
