this post was submitted on 05 Feb 2024

763 points (100.0% liked)

Memes

52836 readers

145 users here now

Rules:

- Be civil and nice.

- Try not to excessively repost, as a rule of thumb, wait at least 2 months to do it if you have to.

founded 6 years ago

MODERATORS

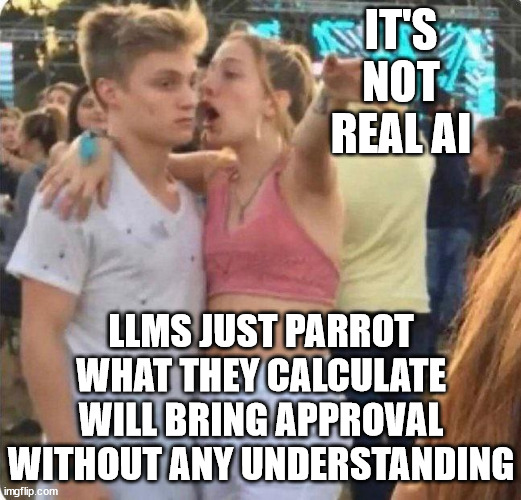

I find this line of thinking tedious.

Even if LLMs can't be said to have 'true understanding' (however you're choosing to define it), there is very little to suggest they should be able to ~~understand~~ predict the correct response to a particular context, abstract meaning, and intent given the primitive tools they were built with.

If there's some as-yet uncrossed threshold to a bare-minimum 'understanding', it's because we simply don't have the language to describe what that threshold is or to know when it has been crossed. If the assumption is that 'understanding' cannot be a quality granted to a transformer-based model (or even a quality granted to computers generally), then we need some other word to describe what LLMs are doing, because 'predicting the next-best word' is an insufficient description for what would otherwise be a sleight-of-hand trick.

There's no doubt that there's a lot of exaggerated hype around these models and LLM companies, but some of these advancements published in 2022 surprised a lot of people in the field, and their significance shouldn't be slept on.

Certainly don't trust the billion-dollar companies hawking their wares, but don't ignore the technology they're building, either.

You are best off thinking of LLMs as highly advanced autocorrect. They don't know what words mean. When they output a response to your question, the only process that occurred was "which words are most likely to come next".
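To make the "which words are most likely to come next" idea concrete, here's a toy sketch. It's a bigram counter, which is vastly simpler than a transformer and not how any real LLM is implemented; the function names and the tiny corpus are made up purely for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the text."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical toy corpus; a real model is trained on vastly more text.
corpus = "the cat sat on the mat and the cat slept"
model = train_bigrams(corpus)
print(predict_next(model, "the"))  # prints "cat" ("cat" follows "the" twice, "mat" once)
```

A phone-keyboard style predictor works roughly along these lines; whether that's a fair description of what an LLM does is exactly what this thread is arguing about.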

And we all know how often autocorrect is wrong.

Yep. Been having trouble with mine recently; it's managed to learn my typos and it's getting quite frustrating.

Did you mean "shouldn't"? Otherwise I'm very confused by your response

No, I mean 'should', as in:

It's like using a description for how covalent bonds are formed as an explanation for how it is you know when you need to take a shit.

Fair enough, that just seemed to be the opposite of the point the rest of your post was making, so it seemed like a typo.

I don't think so...